For two decades, data center networks were designed primarily for north-south traffic: users outside the facility sending requests in, servers processing them, and responses flowing back out. In 2026, the dominant performance constraint is east-west traffic — the horizontal movement of data between servers, applications, containers, and GPU clusters inside the same facility.

Three forces have accelerated this shift:

Container and Microservices Architectures: Modern multi-tier architectures decompose monoliths into microservices running in separate pods, often on different servers. Calls that once stayed inside a single process now cross service boundaries as network packets.

AI and GPU Cluster Workloads: Large AI training jobs produce massive east-west traffic during gradient synchronization across hundreds of GPUs. A single run can move terabytes of data per hour, so any network inefficiency turns directly into expensive GPU idle time.
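To see why gradient synchronization produces so much traffic, here is a back-of-the-envelope sketch. It assumes ring all-reduce, in which each of N GPUs sends 2(N-1)/N times the gradient size per synchronization step (a reduce-scatter followed by an all-gather). The model size, gradient precision, GPU count, and step rate in the example are hypothetical, not measurements from any specific job.

```python
def ring_allreduce_bytes_per_gpu(gradient_bytes: float, num_gpus: int) -> float:
    """Bytes each GPU sends in one ring all-reduce (reduce-scatter + all-gather)."""
    if num_gpus < 2:
        return 0.0  # nothing to synchronize with a single GPU
    return 2 * (num_gpus - 1) / num_gpus * gradient_bytes


def fabric_bytes_per_hour(gradient_bytes: float, num_gpus: int, steps_per_hour: int) -> float:
    """Total bytes all GPUs inject into the fabric over one hour of training."""
    return ring_allreduce_bytes_per_gpu(gradient_bytes, num_gpus) * num_gpus * steps_per_hour


if __name__ == "__main__":
    # Hypothetical job: 1B parameters, FP16 gradients (2 bytes each),
    # 256 GPUs, 1,000 optimizer steps per hour.
    grad_bytes = 1e9 * 2
    per_gpu = ring_allreduce_bytes_per_gpu(grad_bytes, 256)
    total = fabric_bytes_per_hour(grad_bytes, 256, 1000)
    print(f"per GPU per step: {per_gpu / 1e9:.2f} GB")
    print(f"fabric-wide per hour: {total / 1e12:.0f} TB")
```

Because per-GPU traffic approaches twice the gradient size regardless of cluster size, total fabric load grows roughly linearly with the number of GPUs.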

Multi-cloud and Hybrid Architectures: Workloads spanning on-premises and cloud environments require high-bandwidth internal paths to move data efficiently.

2. The Leaf-Spine Architecture: Making East-West Traffic Scalable

2.1 Why Leaf-Spine is the Baseline

The leaf-spine topology (Clos fabric) provides consistent hop counts and multiple equal-cost paths.

Every leaf switch connects to every spine switch.

East-west traffic between servers on different leaf switches traverses exactly two hops: Leaf → Spine → Leaf.

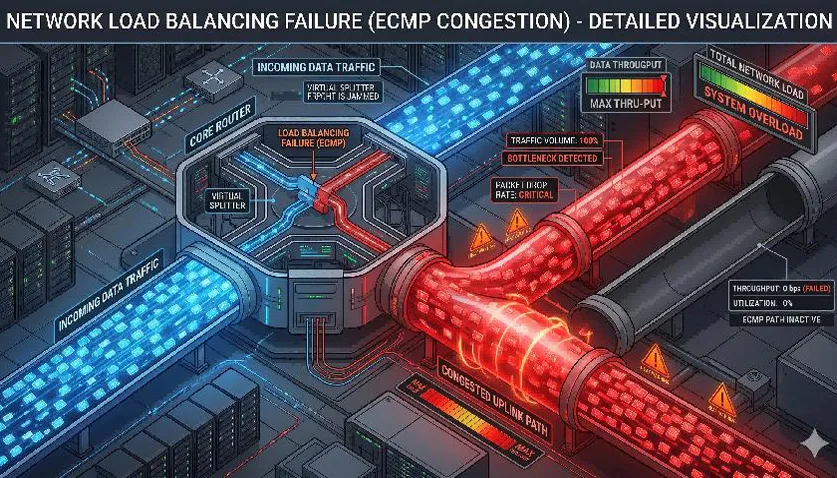

ECMP (Equal-Cost Multi-Path) distributes flows across all available uplinks.
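ECMP switches typically hash packet header fields (commonly the 5-tuple) and use the result to pick one of the equal-cost uplinks, so every packet of a flow follows the same path and arrives in order. The minimal sketch below uses CRC32 from Python's `zlib` purely as a stand-in; real switch ASICs use vendor-specific hash functions, field selections, and seeds.

```python
import zlib
from typing import NamedTuple


class FiveTuple(NamedTuple):
    src_ip: str
    dst_ip: str
    protocol: int  # e.g. 6 = TCP, 17 = UDP
    src_port: int
    dst_port: int


def ecmp_select(flow: FiveTuple, num_uplinks: int) -> int:
    """Map a flow to one of num_uplinks equal-cost paths (hash modulo N)."""
    key = f"{flow.src_ip}|{flow.dst_ip}|{flow.protocol}|{flow.src_port}|{flow.dst_port}"
    return zlib.crc32(key.encode()) % num_uplinks


if __name__ == "__main__":
    # Eight hypothetical flows from one server to another rack, four spine uplinks.
    for port in range(40000, 40008):
        flow = FiveTuple("10.0.1.10", "10.0.2.20", 6, port, 443)
        print(port, "-> uplink", ecmp_select(flow, 4))
```

Because the choice depends only on header fields and not on link load, two large flows can land on the same uplink, which is the hot-spot problem discussed in section 3.1.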

2.2 Sizing the Fabric: Oversubscription Targets

| Deployment Size | Leaf Switch | Spine Switch | Uplink Speed | Typical Oversubscription |
|---|---|---|---|---|
| Small (<200 servers) | IRSL3LM-04X24GP-2D-Z8 | CSL3M-04X48GP-H2A-L | 25G SFP28 | 1:4 to 1:6 |
| Medium (200-1000 servers) | IRSL3LM-04X24GP-2D-Z8 | CSL3M-04X48GP-H2A-L (x2) | 100G QSFP28 | 1:2 to 1:4 |
| Large (AI Workloads) | CSL3M-04X48GP-H2A-L | CSL3M-04X48GP-H2A-L (x4+) | 100G or 400G | 1:1 or 1:2 |
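The oversubscription figure compares a leaf's server-facing (downlink) bandwidth with its uplink bandwidth toward the spines. In the sketch below, a factor of 4.0 corresponds to the table's "1:4" entry (one unit of uplink capacity per four units of server-facing capacity), and 1.0 is non-blocking. The port counts and speeds in the example are hypothetical and are not the specifications of the models listed above.

```python
import math


def oversubscription(downlink_ports: int, downlink_gbps: float,
                     uplink_ports: int, uplink_gbps: float) -> float:
    """Downlink-to-uplink bandwidth factor (4.0 means 1 Gb/s up per 4 Gb/s down)."""
    up = uplink_ports * uplink_gbps
    if up <= 0:
        raise ValueError("uplink capacity must be positive")
    return (downlink_ports * downlink_gbps) / up


def uplinks_needed(downlink_ports: int, downlink_gbps: float,
                   uplink_gbps: float, target_factor: float) -> int:
    """Smallest uplink count that keeps oversubscription at or below target_factor."""
    return math.ceil(downlink_ports * downlink_gbps / (uplink_gbps * target_factor))


if __name__ == "__main__":
    # Hypothetical leaf: 48 x 25G server ports, 4 x 100G uplinks.
    print(oversubscription(48, 25, 4, 100))   # 3.0
    # Uplinks of 100G required for a non-blocking (1:1) design.
    print(uplinks_needed(48, 25, 100, 1.0))   # 12
```

Working backwards from a target ratio, as `uplinks_needed` does, shows how quickly a 1:1 AI fabric multiplies spine port requirements compared with a 1:4 general-purpose design.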

2.3 Physical Layer Considerations

3. Controlling Congestion: ECMP, ECN, and RoCE

3.1 Flowlet-based ECMP

Standard hash-based ECMP can create "hot spots" when large elephant flows (such as storage backups) hash onto the same link. Flowlet-aware ECMP (supported by the Lanbras CSL3M-04X48GP-H2A-L) splits these flows at natural idle gaps into bursts called flowlets, allowing the ASIC to steer each burst onto a less-loaded path while keeping packet reordering unlikely.
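Flowlet switching relies on idle gaps inside a flow: if the time since a flow's previous packet exceeds a threshold (chosen to be larger than the delay difference between paths), the next burst can move to another path without arriving ahead of earlier packets. The sketch below assumes a fixed gap threshold and picks the path with the fewest bytes sent so far; both choices are illustrative and are not a description of the Lanbras ASIC's actual algorithm.

```python
from dataclasses import dataclass, field


@dataclass
class FlowletTable:
    num_paths: int
    gap_threshold_us: float                              # idle gap that starts a new flowlet (hypothetical)
    last_seen_us: dict = field(default_factory=dict)     # flow id -> time of its last packet
    assigned_path: dict = field(default_factory=dict)    # flow id -> path of its current flowlet
    path_bytes: list = field(default_factory=list)       # bytes sent on each path

    def __post_init__(self):
        if not self.path_bytes:
            self.path_bytes = [0] * self.num_paths

    def route(self, flow_id: str, now_us: float, size_bytes: int) -> int:
        last = self.last_seen_us.get(flow_id)
        if last is None or now_us - last > self.gap_threshold_us:
            # New flowlet: safe to re-steer onto the currently least-loaded path.
            self.assigned_path[flow_id] = min(range(self.num_paths),
                                              key=self.path_bytes.__getitem__)
        path = self.assigned_path[flow_id]
        self.last_seen_us[flow_id] = now_us
        self.path_bytes[path] += size_bytes
        return path


if __name__ == "__main__":
    table = FlowletTable(num_paths=2, gap_threshold_us=50.0)
    print(table.route("A", 0.0, 9000))    # first packet of flow A
    print(table.route("B", 1.0, 9000))    # flow B avoids A's path
    print(table.route("A", 10.0, 9000))   # short gap: same flowlet, same path
    print(table.route("A", 200.0, 9000))  # long gap: new flowlet may move
```

Packets within a flowlet always stay on one path; only the boundaries between flowlets are opportunities to rebalance.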

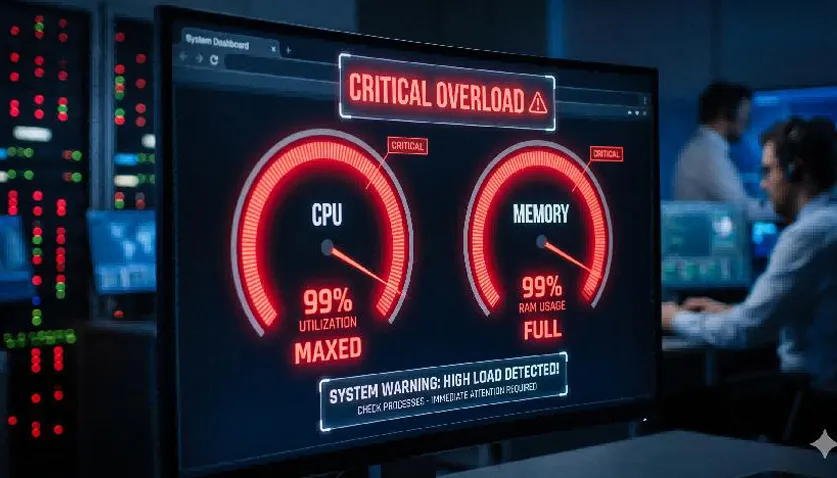

3.2 ECN (Explicit Congestion Notification)

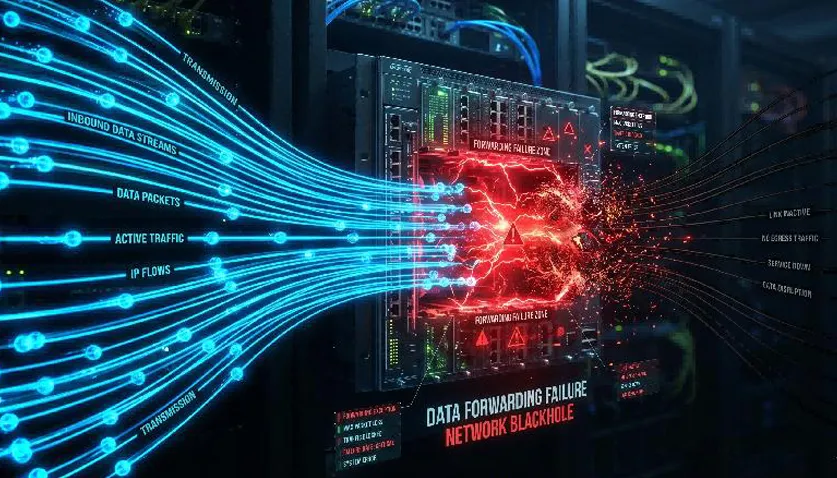

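Instead of waiting for a queue to overflow and drop packets, an ECN-enabled switch marks them (setting the Congestion Experienced codepoint in the IP header) once queue depth crosses a configured threshold. The receiver echoes the mark back to the sender, whose TCP stack reduces its sending rate, stabilizing the queue before the buffer overflows.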
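Switches commonly decide whether to mark with a RED/WRED-style profile: no marking below a minimum queue threshold, a marking probability that rises linearly up to a maximum probability at the maximum threshold, and marking of every ECN-capable packet above it. The sketch below follows that profile under stated assumptions: thresholds in KB and the maximum probability are hypothetical, and whether a given platform uses exactly this curve (some drop rather than mark above the maximum threshold) should be checked against its documentation. The ECN codepoint values are those defined in RFC 3168.

```python
import random

# Two-bit ECN field values (RFC 3168).
NOT_ECT, ECT1, ECT0, CE = 0b00, 0b01, 0b10, 0b11


def mark_probability(queue_kb: float, min_kb: float, max_kb: float, max_p: float) -> float:
    """RED-style marking probability for the current queue depth."""
    if queue_kb <= min_kb:
        return 0.0
    if queue_kb >= max_kb:
        return 1.0  # this sketch marks everything above max; some platforms drop here
    return max_p * (queue_kb - min_kb) / (max_kb - min_kb)


def enqueue_action(ecn_bits: int, queue_kb: float, min_kb: float, max_kb: float,
                   max_p: float, rng=random.random) -> str:
    """Return 'forward', 'mark' (set CE), or 'drop' for one arriving packet."""
    if rng() >= mark_probability(queue_kb, min_kb, max_kb, max_p):
        return "forward"
    # ECN-capable packets are marked instead of dropped; others fall back to a drop.
    return "mark" if ecn_bits in (ECT0, ECT1, CE) else "drop"


if __name__ == "__main__":
    # Hypothetical profile: start marking at 20 KB, mark everything above 80 KB.
    for depth in (10, 50, 90):
        print(depth, "KB ->", enqueue_action(ECT0, depth, 20, 80, 0.2))
```

For RoCEv2 the same switch-side marking applies, but the reaction happens in the NIC: under DCQCN the receiver returns Congestion Notification Packets and the sender lowers its rate.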

3.3 RoCEv2 (RDMA over Converged Ethernet)

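RoCEv2 is critical for AI clusters because RDMA delivers microsecond-scale latency, but it is highly sensitive to packet loss. It therefore requires a lossless fabric, typically built from Priority Flow Control (PFC) and ECN-based congestion control, as configured in sections 5.2 and 5.3.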

4. QoS, Micro-Segmentation, and Telemetry

4.1 QoS Design for Mixed Workloads

| Traffic Class | Source | Priority | Queue Treatment |
|---|---|---|---|
| RoCE/Storage | GPU Clusters, NVMe-oF | Highest (PFC) | Priority Queue + ECN |
| API Calls | Microservices | High | Short Queue, Low Latency |
| General Compute | Standard Workloads | Medium | Default Queue |
| Batch/Backup | Replication Jobs | Low | WDRR Scheduling |
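The queue treatments above combine strict priority with weighted scheduling. The minimal sketch below assumes the RoCE/Storage class is served with strict priority and the remaining classes share the link through deficit round robin (DRR), where each class's quantum acts as its weight (the "W" in WDRR). The class names and quantum values are hypothetical; switch ASICs implement scheduling in hardware with platform-specific parameters.

```python
from __future__ import annotations

from collections import deque
from typing import Iterator


class StrictPlusDRR:
    """One strict-priority class plus deficit round robin over the remaining classes."""

    def __init__(self, strict_class: str, drr_quanta: dict[str, int]):
        if any(q <= 0 for q in drr_quanta.values()):
            raise ValueError("DRR quanta must be positive")
        self.strict_class = strict_class
        self.strict_queue = deque()                       # packet sizes in bytes
        self.queues = {name: deque() for name in drr_quanta}
        self.quanta = drr_quanta                          # byte credit per round (hypothetical weights)
        self.deficit = {name: 0 for name in drr_quanta}

    def enqueue(self, cls: str, size: int) -> None:
        if cls == self.strict_class:
            self.strict_queue.append(size)
        else:
            self.queues[cls].append(size)

    def drain(self) -> Iterator[tuple[str, int]]:
        """Yield (class, packet size) in transmit order until every queue is empty."""
        while self.strict_queue or any(self.queues.values()):
            # Strict priority: emptied before every DRR round.
            while self.strict_queue:
                yield self.strict_class, self.strict_queue.popleft()
            for name, q in self.queues.items():
                if not q:
                    continue
                self.deficit[name] += self.quanta[name]
                while q and q[0] <= self.deficit[name]:
                    size = q.popleft()
                    self.deficit[name] -= size
                    yield name, size
                if not q:
                    self.deficit[name] = 0  # idle queues do not bank credit


if __name__ == "__main__":
    sched = StrictPlusDRR("roce", {"api": 3000, "general": 1500, "batch": 500})
    for cls, size in [("batch", 1500), ("general", 1500), ("general", 1500),
                      ("api", 1500), ("api", 1500), ("roce", 1000), ("roce", 1000)]:
        sched.enqueue(cls, size)
    print([cls for cls, _ in sched.drain()])
```

Because the strict-priority queue is always served first, a sustained burst in that class can starve the others; the DRR quanta only divide whatever bandwidth remains.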

4.2 Micro-Segmentation & Telemetry

Micro-segmentation restricts each workload to the communication paths it actually needs, limiting lateral movement and reducing broadcast flooding. Alongside it, streaming telemetry through the LanbrasView NMS lets operators watch queue depths and ECMP heatmaps in real time.
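Micro-segmentation policies are usually expressed as an explicit allow-list between workload groups, with everything else denied by default. The sketch below shows that default-deny check; the group names, ports, and rule format are hypothetical and do not reflect LanbrasView's or any other product's policy syntax.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    src_group: str
    dst_group: str
    protocol: str
    port: int


# Hypothetical allow-list: web tier may call the API tier, API tier may reach the database.
ALLOW = frozenset({
    Rule("web", "api", "tcp", 8443),
    Rule("api", "db", "tcp", 5432),
})


def is_allowed(src_group: str, dst_group: str, protocol: str, port: int,
               rules: frozenset = ALLOW) -> bool:
    """Default deny: permit a flow only if it matches an explicit allow rule."""
    return Rule(src_group, dst_group, protocol, port) in rules


if __name__ == "__main__":
    print(is_allowed("web", "api", "tcp", 8443))  # permitted path
    print(is_allowed("web", "db", "tcp", 5432))   # web tier cannot skip the API tier
```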

5. Lanbras Data Center Switch Configuration Examples

5.1 QoS Configuration: DSCP-Based Classification

```text
IRSL3LM04X24GP(config)# class-map match-any ROCE-TRAFFIC
IRSL3LM04X24GP(config-cmap)# match dscp 34
IRSL3LM04X24GP(config-cmap)# exit
IRSL3LM04X24GP(config)# policy-map QoS-POLICY
IRSL3LM04X24GP(config-pmap)# class ROCE-TRAFFIC
IRSL3LM04X24GP(config-pmap-c)# set dscp 34
IRSL3LM04X24GP(config-pmap-c)# priority
IRSL3LM04X24GP(config-pmap-c)# exit
IRSL3LM04X24GP(config-pmap)# exit
IRSL3LM04X24GP(config)# interface range gi1/0/1-24
IRSL3LM04X24GP(config-if-range)# service-policy input QoS-POLICY
```

5.2 PFC Configuration for Lossless Fabric

```text
CSL3M04X48GP(config)# vlan 100 name ROCE-FABRIC
CSL3M04X48GP(config)# interface range gi1/0/1-24
CSL3M04X48GP(config-if-range)# dcbx mode ieee
CSL3M04X48GP(config-if-range)# priority-flow-control mode on
CSL3M04X48GP(config-if-range)# priority-flow-control priority 3 no-drop
```
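For PFC to protect RoCE traffic, the DSCP value matched in the QoS policy has to map to the 802.1p priority enabled as no-drop. A common default is to take the top three bits of the DSCP (DSCP >> 3) as the priority; under that assumption, DSCP 34 from section 5.1 lands in priority 4, not the priority 3 configured above, while DSCP 26 would land in priority 3. The sketch below checks that consistency. The default mapping is an assumption: confirm the switch's actual DSCP-to-priority mapping before relying on it.

```python
def dscp_to_priority(dscp: int) -> int:
    """Assumed default mapping: the three most significant DSCP bits become the 802.1p priority."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0-63")
    return dscp >> 3


def check_lossless(dscp: int, no_drop_priorities: set) -> bool:
    """True if traffic marked with this DSCP lands in a PFC no-drop priority."""
    return dscp_to_priority(dscp) in no_drop_priorities


if __name__ == "__main__":
    no_drop = {3}  # corresponds to "priority-flow-control priority 3 no-drop"
    for dscp in (34, 26):
        print(f"DSCP {dscp} -> priority {dscp_to_priority(dscp)}, "
              f"lossless: {check_lossless(dscp, no_drop)}")
```

If the check fails, either classify RoCE on a DSCP that maps to the no-drop priority or configure an explicit DSCP-to-priority mapping on the switch.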

5.3 ECN Configuration

```text
IRSL3LM04X24GP(config)# ecn enable
IRSL3LM04X24GP(config)# ecn threshold queue 0 50 75
```

6. Lanbras Product Comparison

| Product | Ports / Form Factor | Uplinks / Speed & Reach | Best Fit |
|---|---|---|---|
| IRSL3LM-04X24GP-2D-Z8 | 24x GE | 4x 25G/100G | Leaf switch for mid-size DC |
| CSL3M-04X48GP-H2A-L | 48x GE | 4x 100G QSFP28 | Spine switch / AI Leaf |
| LA-OT-10G3-1.4 | SFP28 | 10G SR/LR | Leaf-Spine optics |
| 2x100GBASE-SR4 | QSFP28 | 100G SR4/LR4 | Spine aggregation optics |


By Lanbras

Lanbras specializes in translating cutting-edge optical and Ethernet transmission technologies into clear, valuable insights that help our customers stay ahead in a fast-evolving digital world.

By turning complex technical concepts into practical, business-driven content, we aim to empower decision-makers with the knowledge they need to make confident, future-ready choices.


2106B, #3D, Cloud Park Phase 1, Bantian, Longgang, Shenzhen, 518129, P.R.C.